Source: The Conversation (Au and NZ) – By Lisa M. Given, Professor of Information Sciences & Director, Social Change Enabling Impact Platform, RMIT University

Roblox has announced significant changes to its gaming platform to enhance safety for children under 16.

The announcement comes just days after a man in the United Kingdom was jailed for 28 months for “obsessively grooming” a 14-year-old girl he met on the platform.

It also comes after the Australian government put Roblox on notice in February over ongoing concerns about online child grooming.

So what are the new safety features? And will they help keep kids safe online?

What are the changes?

Roblox is a massive virtual gaming universe that allows users to create, play and share games with others, globally. It has more than 150 million daily users and hosts more than 44 million user-created games.

The new safety features will start in May in Australia (and June, globally). They’re designed to build on features the company introduced last year, including age assurance checks, making accounts private by default, and grouping users of similar ages.

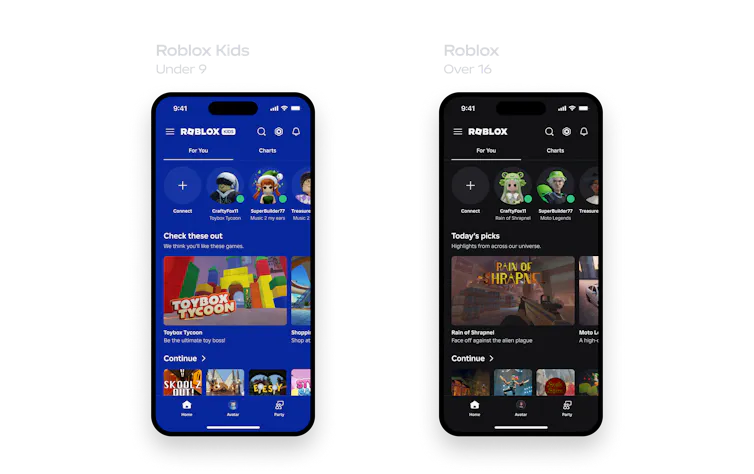

The company will introduce two new, age-based accounts: Roblox Kids for 5 to 8-year-olds and Roblox Select for 9 to 15-year-olds.

The accounts will have distinct background colours so parents can easily see which account their child is using. Users will be allocated to age-appropriate accounts through Roblox’s facial age estimation checks or via parental controls.

Roblox Kids and Select accounts share several features. These include having the chat function set to “off” by default in Australia (though chat will be “on” by default for Select accounts in most other regions).

While Australian Select accounts will gradually introduce chat for older children, both accounts will have parental controls to manage chat and block access to specific games for children under 13.

Once children turn 16 they will transition to Roblox’s standard accounts.

Successful age checks are crucial

In January, Roblox announced it would require age checks for users to access chat. It will now strengthen its approach to user age checks, using the same technology.

Until age checks are complete, users will be limited to a selection of minimal- and mild-rated content, with chat turned off.

Roblox says it will continuously monitor accuracy and require additional checks where player behaviour is inconsistent with the user’s registered age. Parents will be able to correct a child’s age where needed.

- Developer verification requires content creators to either complete a formal ID verification or maintain links to a parent’s account, use two-factor authentication, and maintain an active, paid subscription to the new Roblox Plus accounts.

- Real-time evaluation involves a multimodal moderation system that assesses game content against Roblox’s rules in real time, followed by gameplay by users over 16 to provide feedback and data on how people play the game before it’s made available to younger users.

- Content eligibility means only content rated “minimal” or “mild” will be available in Roblox Kids, with “moderate” content introduced for older children in Roblox Select accounts. Any content tagged as “restricted” (for example, content that has graphic and realistic-looking depictions of violence or sexual themes) will only be available on Roblox’s full platform, for users 18 and older.

Changes to game classification

Roblox will also replace current content maturity labels with country-specific content labels under the International Age Rating Coalition. In Australia, the platform will use the Australian Classification Ratings.

This harmonisation is designed to make it easier for parents to identify age-appropriate content, using Australia’s current advisory ratings.

The new Roblox accounts are designed for children under 16. So they would exclude R18+ games, which will only be available to users 18 and older.

However, if games rated MA15+ are available on Select accounts, parents could decide to allow access for 15-year-olds.

Positive changes with some caveats

Roblox’s new account features and ratings are welcome.

But they show parents must be actively involved in managing children’s accounts, including enabling chat and assessing age-appropriateness of game content and features.

For example, the games and features included in each account will vary by region. So children may ask parents to add games to their accounts that are not included by default.

Parents may find age discrepancies between ratings when assessing games available in other countries. In the United States, for example, ratings include “Teen” (13 and older) and “Mature 17+” (17 and older) that do not align easily with Australia’s PG, M, and MA15+ ratings. This means parents will need to carefully assess whether games are age-appropriate.

It’s also unclear if turning on the chat function in the new accounts in Australia will restrict chatting to others within the same age group, or whether parents can extend chat access to “trusted connections” in both accounts.

Currently, Roblox allows children under 12 to choose trusted friends, with parental approval. But children aged 13–17 can accept a friend request directly. Creating trusted connections is not yet available in all countries. Even where it is, parents must always be extremely cautious when allowing children to chat with other people.

The inability of age assurance technologies to restrict social media accounts for as many as seven in ten children under 16 – due to age estimation errors and people’s ability to circumvent age checks – shows significant technical challenges.

Digital duty of care is needed

While some parents believe gaming apps such as Roblox should be included under Australia’s social media ban, the introduction of digital duty of care legislation is a better approach.

This would require technology companies to take steps to prevent foreseeable online harms – as Roblox is doing with its new accounts – and hold companies accountable for content and system design.

The government introduced, and later paused, digital duty of care legislation in 2024. But Minister for Communications Anika Wells has pledged the government will bring this to parliament this year.

– ref. Roblox is boosting safety features for young people. It’s a step in the right direction – https://theconversation.com/roblox-is-boosting-safety-features-for-young-people-its-a-step-in-the-right-direction-280360